“Detection engineering is where security stops being passive,” said Ajay Nyayapathi, a principal security engineer with nearly two decades in cybersecurity. “It is the discipline of turning observation into readiness, so that when signals appear, the organization already knows how to see them, interpret them, and act.” For years, detection was treated as a downstream function, something that happened after tools were bought and logs were collected. That hierarchy is changing.

In modern environments, where data moves across cloud platforms, identity systems, applications, and endpoints with little regard for old organizational boundaries, the value of security depends less on collection than on interpretation. Detection engineering sits at that hinge point. It asks not merely whether data exists, but whether it is structured well enough to reveal risk before risk becomes disruption. In that sense, it has emerged as one of the least visible and most consequential disciplines in contemporary security operations.

More Than Alerts

The public image of cybersecurity still tends to revolve around alerts: red dashboards, urgent tickets, alarms demanding attention. Detection engineering addresses a quieter question. Who decided what deserved to be seen in the first place? Which behaviors count as suspicious, which patterns deserve escalation, and which signals matter enough to interrupt a human being?

Nyayapathi argues that strong detection is not just a matter of coverage, but of judgment embedded in systems. “A detection is a hypothesis about risk,” he said. “If you build it well, it helps people see clearly. If you build it poorly, it creates noise and false confidence at the same time.” That dual risk—fatigue on one side, blindness on the other—helps explain why detection engineering has become central to organizations trying to modernize security without overwhelming their analysts.

Designing For Clarity

At its best, detection engineering is an act of translation. It takes threat intelligence, operational knowledge, behavioral patterns, and business context, then turns them into logic that can be tested, refined, and reused. It also forces organizations to confront a difficult truth: many security failures are not caused by a lack of data, but by a lack of coherence in how that data is interpreted.
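To make the idea of "logic that can be tested, refined, and reused" concrete, a minimal sketch follows. It is an illustration only, not Nyayapathi's method or any product's rule format: the `Event` and `Detection` types, the field names, and the threshold of five failed attempts are all hypothetical. The sketch shows a detection that carries its hypothesis alongside its matching logic, so the rule can be checked against known benign and known suspicious samples before it ever interrupts an analyst.

```python
from dataclasses import dataclass
from typing import Callable, List

# Every name below (Event, Detection, field names, threshold) is a
# hypothetical choice for illustration, not a real product schema.


@dataclass(frozen=True)
class Event:
    user: str
    action: str            # e.g. "login_success" or "login_failure"
    failed_attempts: int   # failures seen for this user just before the event


@dataclass(frozen=True)
class Detection:
    name: str
    hypothesis: str                    # the risk claim the rule encodes
    matches: Callable[[Event], bool]   # the testable logic


FAILED_ATTEMPT_THRESHOLD = 5  # assumed value; would be tuned from real data


def success_after_repeated_failures(event: Event) -> bool:
    """Flag a successful login that follows many recent failures."""
    return (
        event.action == "login_success"
        and event.failed_attempts >= FAILED_ATTEMPT_THRESHOLD
    )


DETECTION = Detection(
    name="login_success_after_repeated_failures",
    hypothesis="A success after many failures may indicate password guessing.",
    matches=success_after_repeated_failures,
)


def evaluate(detection: Detection, events: List[Event]) -> List[Event]:
    """Return the events the detection would surface, in input order."""
    return [event for event in events if detection.matches(event)]


# Labeled samples act as a regression test for the rule's logic.
BENIGN = Event(user="alice", action="login_success", failed_attempts=0)
SUSPICIOUS = Event(user="bob", action="login_success", failed_attempts=7)

if __name__ == "__main__":
    for hit in evaluate(DETECTION, [BENIGN, SUSPICIOUS]):
        print(f"{DETECTION.name}: {hit.user} ({DETECTION.hypothesis})")
```

Keeping the hypothesis next to the logic is a design choice rather than a requirement. It means that when the threshold is refined later, reviewers can still see which risk the rule was meant to capture.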

That is why Nyayapathi’s work has emphasized structured reasoning and clear communication as much as technical controls. Over time, he has helped mature security functions by improving workflows, strengthening operational routines, and making data more usable for those who must act on it. The underlying principle is simple enough: detection should not be an isolated artifact. It should be part of a feedback loop connecting investigation, learning, and prevention.

The Operational Payoff

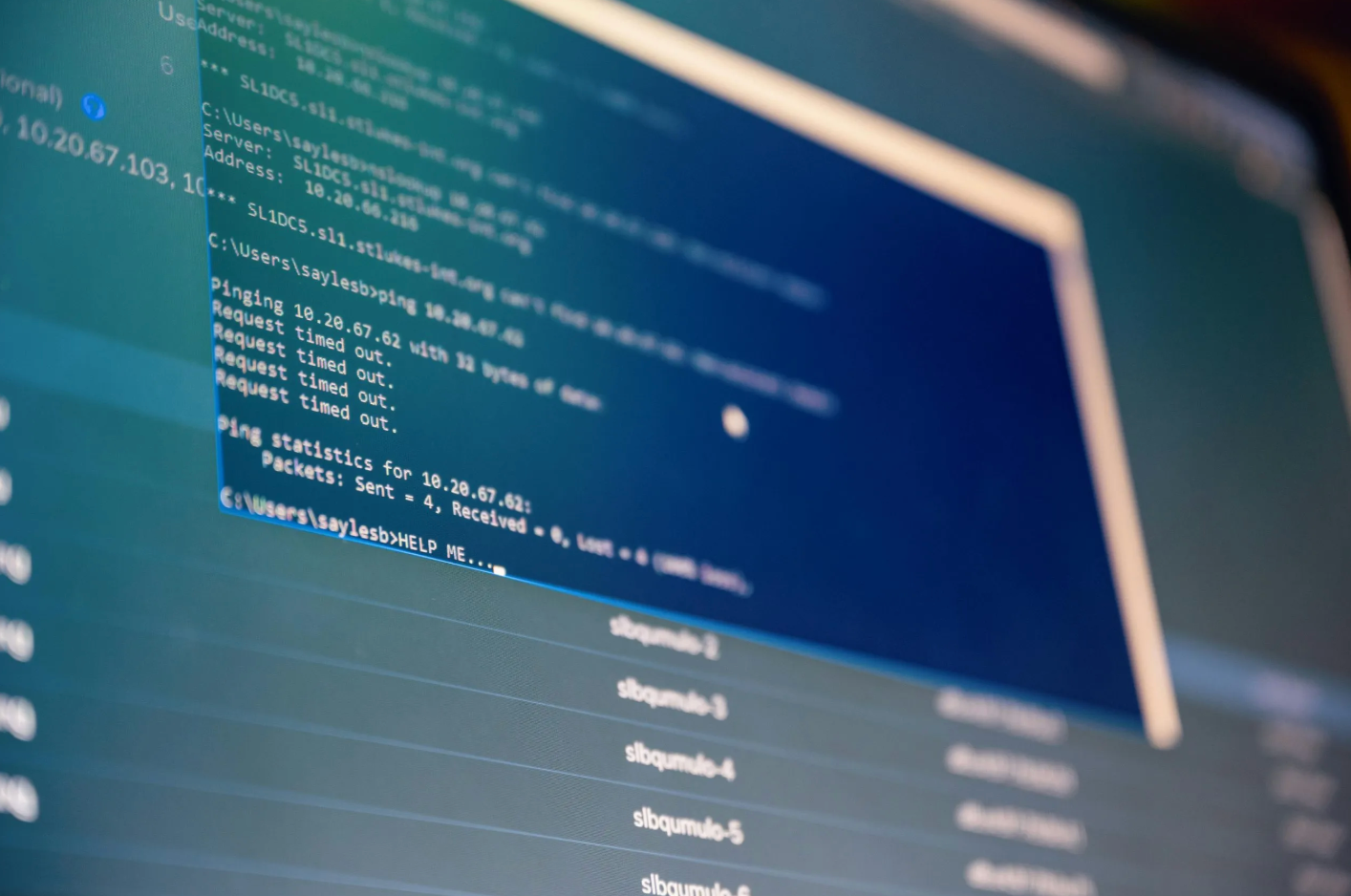

When detection engineering is weak, response becomes improvisation. Analysts spend precious time deciding whether an alert is credible, searching for context that should have arrived with the signal, and piecing together fragments across disconnected tools. When it is strong, the opposite begins to happen: investigations start closer to meaning, triage becomes more disciplined, and security teams gain a clearer sense of which patterns repeat and why.

That improvement matters beyond the security team itself. Better detections can help leaders understand risk in more concrete terms, linking technical findings to operational consequences. They can also make security programs easier to sustain, because they produce evidence that controls are improving rather than merely multiplying. In a field often accused of buying too many tools and explaining too little, detection engineering offers something rarer: a way to make security measurable without making it mechanical.

Where The Field Is Moving

The next phase of detection engineering is likely to be shaped by automation, but not in the simplistic sense that machines will replace analysts. More likely, routine enrichment, correlation, and prioritization will become more continuous, leaving humans to evaluate ambiguity rather than sort raw volume. This is one reason debates about AI in security have become inseparable from discussions of data quality, trust boundaries, and interpretability.

Nyayapathi sees that convergence clearly. “The question is not whether we can automate detection,” he said. “It is whether we can automate it in a way that preserves judgment and accountability.” His published work on prompt injection and LLM security underscores the same theme: as systems become more dynamic, the mechanisms that decide what to trust become more important, not less. Detection engineering, in that context, is no longer just a technical specialty. It is part of how institutions decide what counts as evidence.

The Work Beneath The Work

For all its importance, detection engineering remains largely invisible outside the profession. It does not announce itself like a product launch or a breach headline. Its successes are often measured in what did not happen: the escalation that never came, the anomaly caught early, the response made faster because the signal arrived with meaning attached.

That quietness may be exactly why the field deserves more attention now. “Security resilience is built long before the incident,” Nyayapathi said. “It is built in the quality of the logic, the discipline of the review, and the willingness to keep refining what the organization believes is important.” If that is right, then detection engineering is not simply about seeing more. It is about seeing well enough, early enough, to change what happens next.